Hi there AppWorks fans,

Welcome to a new installment of AppWorks tips.

How’s that for a headline!? 😁…My friend “ChatGPT” does this magic with a starting prompt like this: Make this title more clickbait: ...! Great, but this post shows you a totally different concept: load-balancing a service container. Service container? Yes, those “things” in the ‘System Resource Manager’ artifact, each belonging to a corresponding service group. Guess what…A service group can manage multiple service containers! So, it’s cloning time…

Let’s get right into it…

I’m writing this post in relation to a current project that runs 2 nodes of the platform; they would like to load-balance the e-mail service over 2 service containers (one per node). Well, a second node (in my opinion) is nothing more than a second AppWorks installation (with a new TomEE application server). Leave a comment if I’m oversimplifying with this statement (my multi-node installation is in the backlog for a new post). The load-balancing principles for multiple (cloned) service containers are managed by the service group. So, to evaluate our thoughts, we don’t really need a second node…Again, leave a comment if you think otherwise!

What we need is just one (e-mail) service container under one service group; we make a clone of it and connect the clone to the same AppWorks node/instance (in my case). With this configuration we can then play with the different “routing algorithms” and assess a large set of service calls with the “Test Web Gateway” artifact…Time for action!

First, an SMTP server to send out mail! Well, there is only one tool that rules the world here for us “developers”; That will be smtp4dev.

In quick steps:

- Download the package to your local laptop; I use Rnwood.Smtp4dev-win-x64-3.2.0-ci20221023104.zip

- Unzip it locally

- Find ‘Rnwood.Smtp4dev.exe’ in the root of the folder, and double-click it

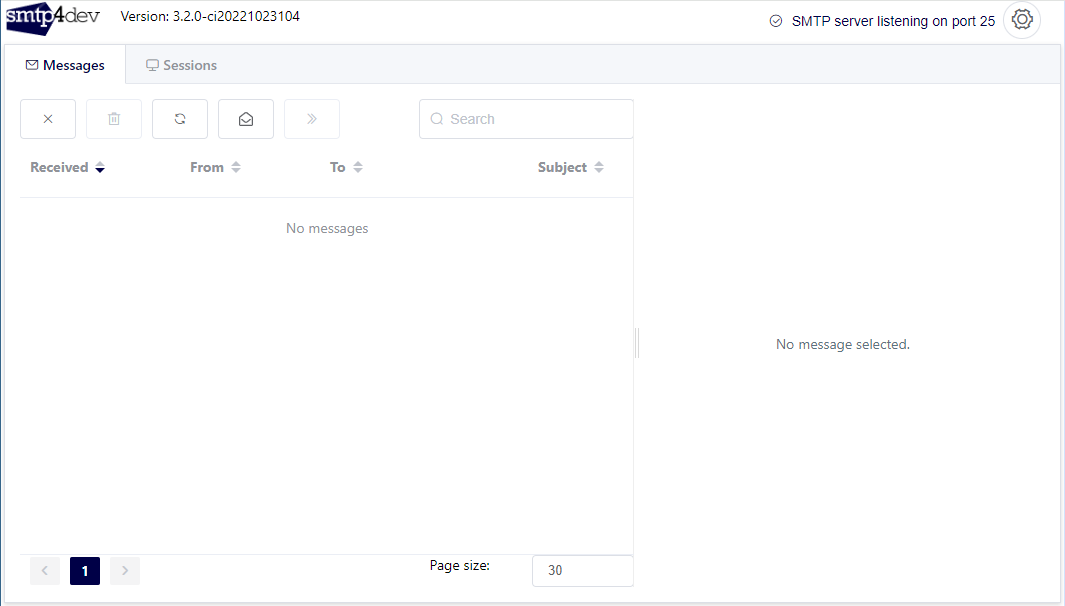

- DONE…Oh, and point your browser to http://localhost:5000 for a simple receiver client:

…

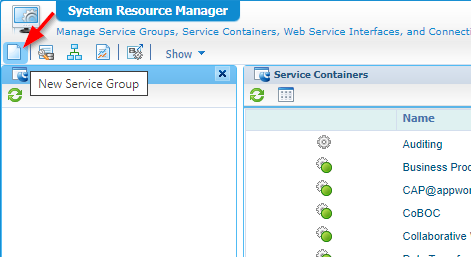

Great, now spin up your machine and move into your favorite organization. Open the ‘System Resource Manager’ artifact and create yourself a new service group with input from these steps:

- Connector type: E-mail

- Group name: sg_mail

- Select the one ‘Method Set Email’ service interface

- Take note of the default ‘Routing Algorithm’; More on this setting later…

- Service name: sc_mail_instance_1

- Start up the service automagically

- Assign it also to the OS process (for now…)

- For the mail server itself:

  - Leave the incoming server as is (we don’t use/need it for our example)

  - The outgoing mail server is the IP of your local laptop (pingable from your AppWorks VM); Port is 25!

- Leave all the rest as it is and finish up the wizard.
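Before you trust the wizard settings, it can help to verify from the AppWorks VM that port 25 on your laptop is really reachable. Below is a minimal Python sketch for that, assuming Python 3 is available on the VM. The script, its function names, and the mail addresses are made up for illustration; they are not part of AppWorks or smtp4dev.

```python
import smtplib
import socket
import sys
from email.message import EmailMessage


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True when a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def build_test_mail(sender: str, recipient: str) -> EmailMessage:
    """Build a tiny plain-text mail; the addresses are placeholders."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = "smtp4dev connectivity check"
    msg.set_content("Hello from the AppWorks VM!")
    return msg


def send_test_mail(host: str, port: int = 25) -> None:
    """Hand one test mail over to the SMTP server (smtp4dev in this post)."""
    msg = build_test_mail("awp@example.test", "me@example.test")
    with smtplib.SMTP(host, port, timeout=10) as smtp:
        smtp.send_message(msg)


if __name__ == "__main__":
    # Usage (hypothetical script name): python check_smtp.py <laptop-ip>
    if len(sys.argv) != 2:
        print("usage: python check_smtp.py <ip-of-laptop-running-smtp4dev>")
    elif port_is_open(sys.argv[1], 25):
        send_test_mail(sys.argv[1])
        print("Mail handed over; check the smtp4dev inbox.")
    else:
        print("Port 25 is not reachable; check firewall and IP address.")
```

If the mail shows up in the smtp4dev web client, the outgoing server setting of the service container should work with the same IP and port.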

…

Great again! Time for a first test call on the ‘SendMail’ service operation via the ‘Web Service Interface Explorer’ artifact (make sure you pick the one from your own service group, as the ‘Shared’ space also has a service container of this type!). The message is formatted like this:

    <SOAP:Envelope xmlns:SOAP="http://schemas.xmlsoap.org/soap/envelope/">

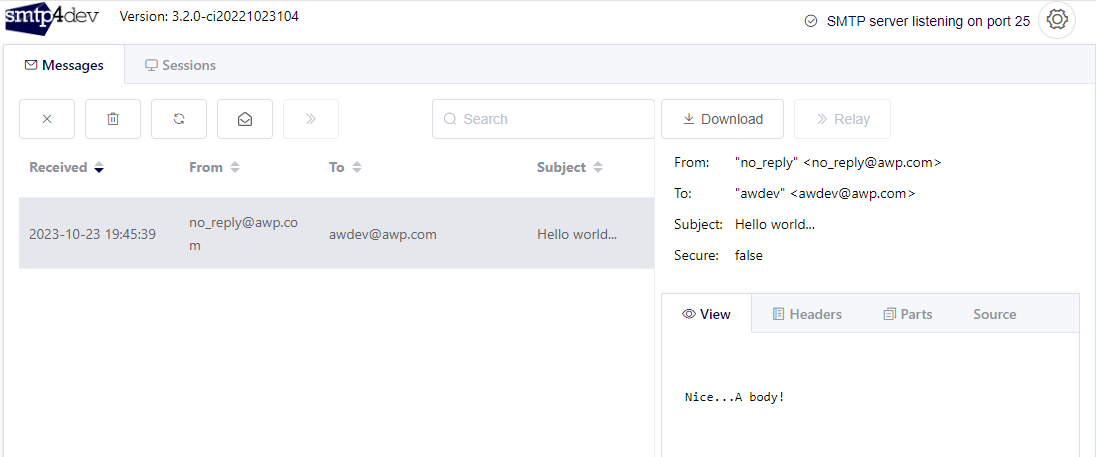

The same second I get a mail nicely dropped off in smtp4dev:

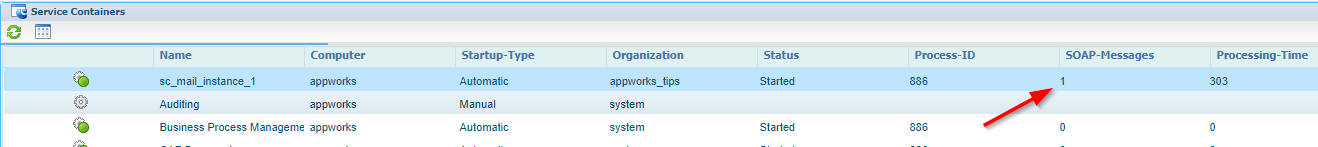

That’s also 1-0 for my service container:

Alright…PARTY ON!! 😎

…

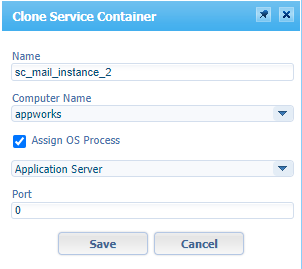

What’s next? Well, it’s cloning time:

Increase the number and save the new service container:

If you have a second node, you will see it in the ‘Computer name’ dropdown; The second dropdown with OS processes can be extended as well. An AppWorks node/instance can have multiple OS processes (just separate JVMs). You can add additional ones in the ‘system’ organization, also via the ‘System Resource Manager’ artifact…Not for now, but for my backlog!

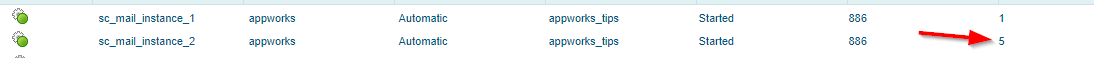

Make sure the service container shines green (incl. the first container) and hit it with a couple of ‘SendMail’ SOAP requests (from above!). I tried 5 of them and guess what:

They’re all executed from the second service container…WHAT!?!? Didn’t I take note of the ‘Simple load balancing’ routing algorithm??

Some background information after #RTFM:

- Simple load balancing - Requests are routed to the started service containers under a service group in a round-robin way. Sounds like a solid solution for most use-cases to me!

- Simple Fail-over - Requests are routed to the first started service container under a service group based on their preference number!? Yes, this number isn’t shown in the UI, but you can see it when you inspect the service instance with a CARS/LDAP explorer, where it’s eventually saved. Again, not for now!

- Classic - Requests are routed to the service containers under a service group randomly without checking their state. Well, that “smells” classic indeed!
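To make the three descriptions a bit more tangible, here is a toy Python model of them. This is only my reading of the documentation text above, not the platform’s real implementation; the class names, the algorithm keys, and the “lower preference number wins” rule are assumptions for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Container:
    name: str
    started: bool = True
    preference: int = 0  # assumption: the lowest number is preferred


class ServiceGroup:
    """Toy service group that picks a container per request."""

    def __init__(self, containers: List[Container],
                 algorithm: str = "simple_load_balancing",
                 seed: Optional[int] = None) -> None:
        self.containers = list(containers)
        self.algorithm = algorithm
        self._counter = 0
        self._rng = random.Random(seed)

    def route(self) -> Container:
        if self.algorithm == "classic":
            # Random pick, without checking the container state.
            return self._rng.choice(self.containers)

        started = [c for c in self.containers if c.started]
        if not started:
            raise RuntimeError("no started service container in this group")

        if self.algorithm == "simple_failover":
            # Always the started container with the best preference.
            return min(started, key=lambda c: c.preference)

        if self.algorithm == "simple_load_balancing":
            # Round-robin over the started containers only.
            chosen = started[self._counter % len(started)]
            self._counter += 1
            return chosen

        raise ValueError(f"unknown algorithm: {self.algorithm}")


if __name__ == "__main__":
    group = ServiceGroup([
        Container("sc_mail_instance_1", preference=1),
        Container("sc_mail_instance_2", preference=2),
    ])
    for _ in range(4):
        print(group.route().name)
```

With ‘Simple load balancing’ you would expect the two names to alternate, which is exactly what my 5 test calls did not show.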

So, my default routing mechanism was selected already, but my service containers don’t behave like it!? What’s going on here? Well, I have no clue, but isn’t this strange? On heavily loaded systems this sounds to me like a crucial issue, but my 23.4 environment still behaves like this.

Keep reading, because after contact with OpenText support about the architecture, the story gets a much clearer spin…

…

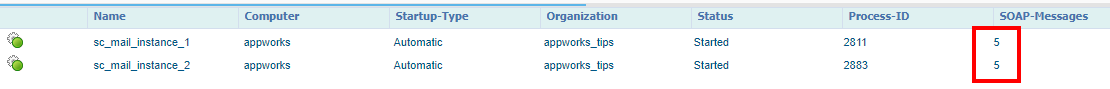

OK, but what if we really, really want this routing algorithm to work? Well, then detach the service containers from the OS process! So, that’s exactly what I did; See the end result after calling the ‘SendMail’ service request 10 times and a restart of both service containers:

It’s a party; Exactly as expected! 🤠

Is it smart? Well, for my ‘E-mail’ service container we’re pretty safe (I guess), but leave a comment if you think otherwise! Not all service containers can run without the OS process, like the ‘Application Server Connector’ and the ‘WS AppServer Connector’…Also here, leave a comment, as I’m learning too as we go deeper into the AppWorks platform. I guess this also has to do with the “old” part of the platform based on business objects and the “new” part of the platform working with entities. It is what it is…Try out what works for you or ask OpenText for additional support for your specific request.

Final questions:

- How about other service containers? For cloned BPM service containers connected to the OS process I saw the same result.

- And the other routing algorithms? They also started to work as documented all of a sudden once detached from the OS process.

The OpenText architectural spin

After a mail conversation and more investigation (also in the documentation), I came to a new conclusion on top of what we already concluded above! The above scenario is a bit outdated, but the outcome is still good to see. If you want to scale properly, you need to add a second node (just a second installation on a new server). With a new node, new forces come into play, including an external load-balancer that manages the load over the 2 TomEE instances! So, in the scenario from above, we would still clone a service container and connect it to the second node; AND you can also connect it to the OS process of that node. The load-balancer settings in the service group are not followed, as we now have an external load-balancer managing the load over the 2 nodes…Aha-moment for me! 🤔

…

So, when are the load-balancer settings on the service group followed? Read the best-ever-response from OT support:

=================================

The deployment architecture will be as follows:

- Client will communicate with an external load balancer (This is not the AWP load balancer!)

- The external load balancer will be configured to dispatch the request to each of the AWP nodes based on the algorithm chosen by the administrator

- Ideally, AWP nodes should be identical with regard to services that are running on them

- TomEE on the AWP node receiving the request will process the request

- AWP load balancer will not come into play if the AWP nodes are identical

- AWP load balancer will come into play if the nodes are not identical and the node that has received the request does not have the desired service configured on it…Aha-moment again! 🤗

- Service containers will be configured considering the expected load and the resources available on the system
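The list above can be pictured as a two-step dispatch. Here is a small Python sketch of how I understand it: an external round-robin load balancer in front of the nodes, and the AWP load balancer only stepping in when the receiving node doesn’t have the requested service. All names are invented for illustration, and the real platform will certainly do more than this toy model.

```python
from dataclasses import dataclass, field
from itertools import cycle
from typing import Iterator, List, Set, Tuple


@dataclass
class Node:
    name: str
    services: Set[str] = field(default_factory=set)


def external_round_robin(nodes: List[Node]) -> Iterator[Node]:
    """Assumption: the external load balancer simply rotates over the nodes."""
    return cycle(nodes)


def dispatch(service: str, nodes: List[Node],
             external_lb: Iterator[Node]) -> Tuple[str, str]:
    """Return (node that processes the request, who decided that)."""
    entry = next(external_lb)  # node picked by the external load balancer
    if service in entry.services:
        # Identical nodes: the receiving node processes the request itself.
        return entry.name, "local"
    # Non-identical nodes: the AWP load balancer looks for another node.
    for node in nodes:
        if service in node.services:
            return node.name, "awp-load-balancer"
    raise LookupError(f"no node offers service {service!r}")


if __name__ == "__main__":
    nodes = [Node("node1", {"mail"}), Node("node2", set())]
    lb = external_round_robin(nodes)
    for _ in range(4):
        print(dispatch("mail", nodes, lb))
```

In this run node2 has no mail service, so every second request is forwarded to node1 by the (simulated) AWP load balancer; give both nodes the same services and every request stays local.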

The conversation included a special use-case that can be interesting for you as well:

- When it comes to a polling scenario (as in the case of a mail service container), both external and AWP load balancers are not coming into the picture as the request is not coming from any client!

- Poller thread of both service containers will be connected to the mailbox and will be sharing the work

- In case a message is picked up by both service containers the processing will be done by only one of them

- The service container that successfully inserts the mail record in the DB will process it; The other one will get a record already exists error and will skip the processing
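The “first insert wins” idea from the polling scenario is easy to try out yourself. Below is a minimal Python sketch with an in-memory SQLite database, where a primary key plays the role of the “record already exists” error. The table name, column names, and functions are my own invention; I don’t know what the real AppWorks mail table looks like.

```python
import sqlite3
from typing import Iterable, List


def make_store() -> sqlite3.Connection:
    """In-memory stand-in for the shared database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE mail_record (message_id TEXT PRIMARY KEY, owner TEXT)"
    )
    return conn


def try_claim(conn: sqlite3.Connection, message_id: str, container: str) -> bool:
    """Insert the mail record; False means another container was first."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO mail_record (message_id, owner) VALUES (?, ?)",
                (message_id, container),
            )
        return True
    except sqlite3.IntegrityError:
        # The record already exists, so this container skips the mail.
        return False


def poll(conn: sqlite3.Connection, container: str,
         mailbox: Iterable[str]) -> List[str]:
    """Return the messages this container actually gets to process."""
    return [m for m in mailbox if try_claim(conn, m, container)]


if __name__ == "__main__":
    store = make_store()
    # Both pollers see overlapping mail; each message is processed once.
    print(poll(store, "sc_mail_instance_1", ["m1", "m2"]))
    print(poll(store, "sc_mail_instance_2", ["m1", "m2", "m3"]))
```

The second poller only gets the message that wasn’t claimed yet, which matches the behavior OT support describes.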

=================================

Just another interesting thing for you to know; Some service containers (like BPM) have an option to scale in threads, so they can pick up more long-lived BPMs in parallel. For short-lived processes this is also possible, but that’s a secret setting in JMX. Contact OpenText support if you want further clarification on this.

For me, it’s a “DONE” on this post with a big thank you to OpenText support for the clear answer. This definitely helped us to continue the discussion on a ‘Mail container’ struggle we had. I wish you a great “cloned” weekend, and I CU in the next post on AppWorks Tips. Cheers! 🍷 (I know the AppWorks PO likes a good red wine…#metoo!…Ha-ha…busted!)

Don’t forget to subscribe to get updates on the activities happening on this site. Have you noticed the quiz where you find out if you are also “The AppWorks guy”?